Overview

What this project was about

WAE (WAN Automation Engine) is a network analysis application that Internet Service Providers use to keep their networks running without outages. The tool simulates traffic using real network data, forecasts issues, and suggests optimisations. Its poor usability had led to repeated customer complaints and deals lost to competitors. I led the redesign of the WAE Collector, the critical data collection module at the heart of the product.

The Challenge

The Problem

Three major usability failures were costing Cisco deals. First, the CLI-only installation required users to manually verify dependencies (storage, RAM, OS, and frameworks) with no in-product guidance. Second, users struggled to gather the required network access information and device details upfront, which led to failed collection runs. Third, the Composer flow confused new users: data types had strict sequential dependencies that were never communicated, forcing users to "go back and forth to figure out these steps, which is frustrating to them."

Discovery & Research

The 20-day discovery phase combined multiple research methods to build a complete picture. I conducted a heuristic review of the entire application, ran user testing with Technical Management Engineers (TMEs) encountering the tool for the first time, and analysed product wikis, help documentation, technical guides, and presentations. Testing across multiple locations helped us identify recurring patterns rather than one-off edge cases.

Three primary personas emerged: Network Architects who analyse current operations and scalability issues, Network Engineers who reconfigure networks for optimal performance, and NOC Technicians who perform regular analysis and error resolution. Each had distinct mental models about what information they needed and when.

The Core Insight: Sequencing Matters

A critical finding was that network data collection is inherently sequential — and the product never communicated this. Basic topology must be collected before additional layers (VPN, BGP, LSP). Traffic demand data depends on traffic data being present first. External scripts can be injected at multiple phases. XTC topology format determines the LSP format downstream. Users had no way to know any of this from the UI alone.

Three Design Directions Explored

Option 1 — Stepper Approach: Collectors grouped in sequential segments, guiding users step by step from data collection through to scheduling. Clear on sequencing, but too rigid for experienced users who already knew what they needed.

Option 2 — Flexible Configuration: All data options listed by group; users select only what they need and arrange items on a scheduler page. Maximum flexibility, but placed the sequencing burden back on users who already found it confusing.

Option 3 — Hybrid Approach (selected): Users choose the collectors they need, configure only the relevant options, and a horizontal stepper enforces proper sequence automatically. This respected user intent while preventing sequencing errors.
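To make the automatic sequencing in Option 3 concrete, here is a minimal sketch of how a stepper could order a user's chosen collectors. It is not WAE's actual implementation. It assumes a hypothetical dependency map built only from the rules described above (basic topology before VPN, BGP, and LSP; traffic before traffic demand; XTC topology treated as a prerequisite of LSP). The collector names and the `stepper_order` helper are illustrative inventions. Missing prerequisites are pulled in, and the steps are ordered with a topological sort from Python's standard `graphlib` module (3.9+).

```python
from graphlib import TopologicalSorter

# Hypothetical dependency model (not WAE's real one): each collector maps to
# the collectors that must run before it, based on the sequencing rules above.
PREREQUISITES: dict[str, set[str]] = {
    "topology": set(),
    "xtc_topology": set(),
    "vpn": {"topology"},
    "bgp": {"topology"},
    "lsp": {"topology", "xtc_topology"},  # assumption: XTC format feeds LSP
    "traffic": set(),
    "traffic_demand": {"traffic"},
}


def with_prerequisites(selected: set[str]) -> set[str]:
    """Expand the user's selection to include every transitive prerequisite."""
    needed: set[str] = set()
    stack = list(selected)
    while stack:
        name = stack.pop()
        if name not in needed:
            needed.add(name)
            stack.extend(PREREQUISITES[name])
    return needed


def stepper_order(selected: set[str]) -> list[str]:
    """Return the selected collectors (plus prerequisites) in a valid run order."""
    needed = with_prerequisites(selected)
    sorter = TopologicalSorter({name: PREREQUISITES[name] for name in needed})
    sorter.prepare()
    order: list[str] = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # sort each batch so step order is stable
        order.extend(ready)
        sorter.done(*ready)
    return order


print(stepper_order({"bgp", "traffic_demand"}))
# → ['topology', 'traffic', 'bgp', 'traffic_demand']
```

Pulling prerequisites in automatically is only one possible design choice. A stepper could instead flag the missing step and ask the user to confirm it, which keeps user intent more visible at the cost of an extra interaction.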

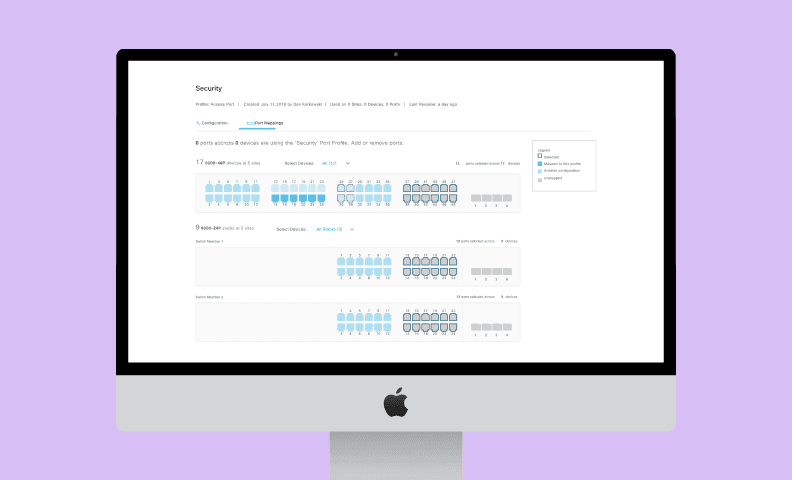

Final Mockups

Wireframes were prioritised before mockups — a conscious decision to focus stakeholder review on flow and function rather than visual polish. Once the structure was validated with the team and TMEs, we moved into high-fidelity mockups and prototypes using the Cisco design system. Option 3 received the strongest consensus from both the design team and subject matter experts.

“Users need to go back and forth to figure out these steps, which is frustrating to them.”

Results

Outcomes & Impact

Reflection

What I learned

The hybrid stepper approach taught me that the best solutions often sit between extremes. Too rigid (Option 1) alienates power users; too flexible (Option 2) overwhelms new users. By making the sequencing automatic while preserving user choice, we solved for both. I'd apply this principle to future designs: ask where the false binary is hiding, and explore the constraint-respecting middle ground. Building wireframes before mockups also remains invaluable for stakeholder alignment: visual hierarchy doesn't matter if the structure is wrong.

Interested in working together?

I'm available for freelance design work, consulting, and speaking opportunities.
